The Question That Arrives Too Late

At some point in almost every major initiative, the same question gets asked. You may have heard it around the second or third steering committee, or when a team is seeking final approval to release funding and formally start work. Occasionally it arrives even later, after the work has already been delivered.

The context varies - culture programs, transformation initiatives, technology investments - but the pattern is remarkably consistent.

In my experience leading culture programs, it usually appears six to twelve months in. By that point the diagnostic work is complete, a culture change roadmap has been assembled, and senior leaders have gradually moved from scepticism to reluctant agreement. The next step is funding.

And that is usually when the question appears.

It takes slightly different forms depending on who asks it, but it usually sounds something like this:

"What is the value that this program will actually deliver?"

In theory, it is a perfectly reasonable question. In practice, the people involved feel their stomachs drop. Not because they're incapable of answering it, but because answering honestly risks reopening decisions and design choices that were settled months earlier.

This happens partly because the original intent has often shifted along the way - something I've written about previously. In my example, the work was initially commissioned as an enabler of a strategy: a way of strengthening the conditions required for that strategy to succeed. By the time this question is asked, however, the two efforts have often drifted apart. Culture work is now expected to generate a new, standalone form of value that it was never designed to produce.

The second complication is subtler. The work was usually commissioned to address a problem rather than produce a clearly measurable output. Demonstrating value from the absence of a problem is inherently awkward. Attempts to reframe the discussion tend to surface trade‑offs — about risk tolerance, behaviour, or leadership choices — that most people would prefer not to reopen.

This is where the search for familiar metrics usually begins. In many organisations I am familiar with, the conversation eventually lands on employee engagement scores as a proxy for cultural strength. Complex cultural dynamics are distilled into a score compiled from a handful of questions about advocacy, pride, and retention.

None of this is irrational. It is simply what happens when the question is asked too late.

By the time measurement enters the conversation, the work has already been designed, and the system has largely decided which outcomes are plausible.

Why Measurement Gets the Blame

Once the question of value appears, the explanation usually turns quickly toward measurement.

Impact is hard to measure. Attribution is complex. Outcomes take time. But those technical difficulties are only part of the story.

Long before measurement is discussed, a set of structural expectations has already shaped how initiatives were designed, and therefore what value is plausible.

Organisations often assume that if something produced results elsewhere, it will produce the same results here if implemented in a similar way. The design effort then focuses on replicating activity rather than examining the specific conditions that made the original outcome possible.

Many organisations expect initiatives to demonstrate incremental value within normal reporting cycles. Yet many of the outcomes organisations care about — capability, culture, resilience, innovation, efficiency — develop over longer horizons. The pressure to show movement quickly pushes work toward outputs that are visible in the short term rather than conditions that matter in the long term.

Enterprise reporting tends to prefer discrete accountability. Each initiative is expected to demonstrate its own measurable contribution, even when the work functions primarily as an enabler of broader system change. Collective or contributory impact is analytically harder to attribute, so it is often avoided.

Leaders are expected to explain value in simple and familiar terms. Boards, investors, and executive teams want clarity. Yet many organisational initiatives are multi‑faceted, long‑term, and difficult to compress into a single number without losing most of the story.

Together, these four expectations shape how work is designed: assumptions about transferability, pressure for short‑term results, demands for discrete ownership, and a preference for simple explanations.

When measurement is later asked to demonstrate impact, it is operating inside those design choices. The response is predictable: invest more effort in measurement, find better indicators, report more frequently, and develop clearer and cleaner definitions of success.

These efforts assume the work was designed in a way that could plausibly produce the intended outcome. That assumption is rarely examined, and it becomes even harder to challenge once delivery has begun.

How Impact Becomes Blocked by Structure

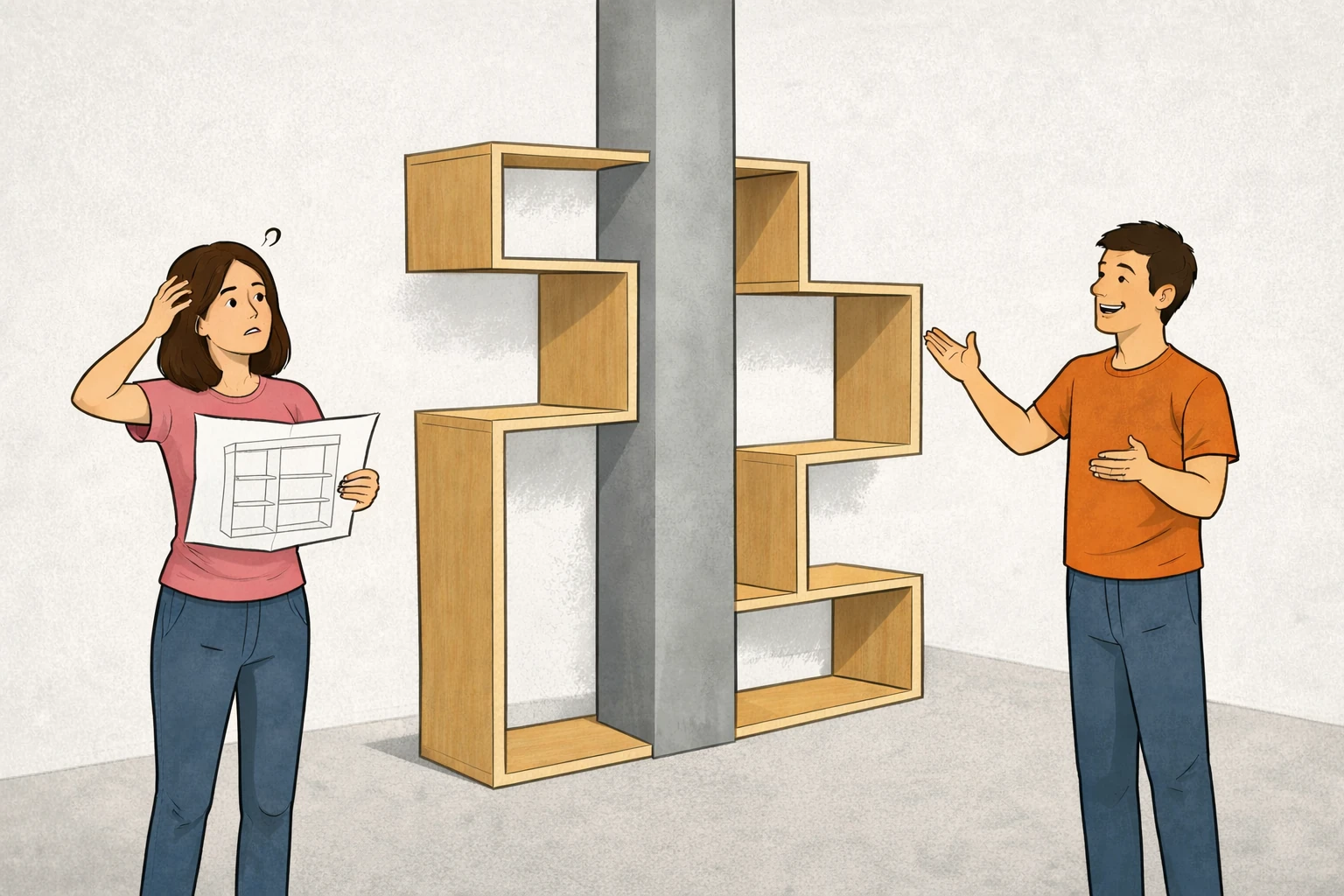

Most initiatives begin with a sensible intent: improve productivity, strengthen safety culture, accelerate innovation, increase customer loyalty. The drift begins when that intent is translated into delivery work organised around what is easiest to govern and monitor: deliverables, milestones, budgets, program structures, and activity.

However, none of these measures guarantee that the conditions required for the intended outcome are actually changing.

Instead, a series of sensible organisational decisions gradually narrows the design space. Programs are scoped as discrete initiatives because funding and governance structures demand clarity. System-level change rarely fits neatly into annual budget cycles.

Outcomes are then assumed rather than engineered, because the link between activity and system behaviour appears obvious at first glance. Of course a new system will improve productivity. Of course a customer retention initiative will increase revenue.

Meanwhile, the broader mechanics of the system are left largely untouched because they sit outside the program’s formal scope. Incentives remain unchanged because altering them creates ripple effects elsewhere. Decision rights stay where they are because redistributing authority invites political friction. Measurement is postponed because the immediate priority is simply getting the work underway.

Each decision is sensible in isolation. Together, however, they produce a structure where activity is carefully governed while outcomes are largely assumed.

When impact later proves difficult to demonstrate, the problem appears analytical.

In reality, it was architectural.

Systems produce outcomes whether they are designed to or not. The only real choice is whether those outcomes are intentional.

When Design Changes the Outcome

If time is spent first translating intent into explicit outcomes, attention naturally shifts to the conditions required for those outcomes to occur.

The design question stops being “what work should we deliver?” and becomes “what must change in the system for this result to become likely?”

Consider the turnaround of Ford under Alan Mulally in the late 2000s.

Mulally didn't solve Ford’s problems by introducing better performance metrics. The company already had extensive reporting. The challenge was that critical problems were surfacing too late for the organisation to respond.

Addressing that outcome required changing the conditions under which problems surfaced inside the leadership system.

What he changed was the personal risk associated with transparency. Weekly business plan reviews forced leaders to surface problems early and, critically, doing so did not trigger punishment.

This altered the choices leaders faced when making decisions. Hiding issues became harder than revealing them. Once that condition changed, coordination improved and corrective action happened earlier.

A similar mechanism appears in the Toyota Production System.

Toyota didn't improve quality by asking managers to monitor defects more carefully. The critical outcome was ensuring that defects were stopped immediately rather than allowed to pass downstream.

Achieving that outcome required redesigning authority on the production line. Any worker could stop the line by pulling the Andon cord if a defect appeared.

That design decision changed the logic of behaviour. Allowing defects to pass became harder than stopping the process to fix them. Quality improved not because defects were measured more carefully, but because the system made defect prevention the rational action.

Measurement could capture improvement only after that behavioural shift had been deliberately engineered.

Even outside manufacturing the pattern repeats. Surgical safety checklists improved outcomes not simply because hospitals introduced a checklist, but because the checklists were designed to restructure the interaction between surgeons, nurses and anaesthetists. Critical safety behaviours were embedded into the workflow itself rather than left to individual vigilance.

In each of these cases, the improvement did not come from measuring results more carefully. It came from redesigning the conditions that produce those results.

Once the system changed, the outcomes followed — and measurement could finally capture the improvement.

Designing Before Delivery

The examples above are not unusual. They simply illustrate what happens when organisations design systems with outcomes in mind.

If impact emerges from system design, the most useful conversations happen earlier than most organisations expect: before delivery begins, during the design phase.

Conversations should start with a few uncomfortable questions.

For example:

- What system condition must change for the intended outcome to occur?

- What behaviour needs to become easier — or harder — as a result of this work?

- What trade-offs are we accepting to create that shift?

- Which existing incentives or reporting might undermine the outcome?

- What early signals would tell us the system is moving in the right direction?

These questions rarely appear in project charters, yet they shape whether impact becomes plausible long before the first metric is selected.

They also reveal something organisations often avoid naming explicitly: outcomes sometimes require structural choices.

And structural choices always involve trade-offs.

The Link to Outcomes-Based Thinking

This is where outcomes-based thinking is often misunderstood.

It is frequently treated as a reporting exercise: replacing activity metrics with outcome metrics on a dashboard. When that happens, the work itself rarely changes. Outputs and outcomes blur, leaders spend more time debating definitions than changing the system, and the same initiatives proceed - just with a different set of indicators attached.

The deeper shift is earlier.

Outcomes-based working means designing initiatives around the mechanisms that produce outcomes, not simply measuring them afterwards.

The distinction sounds subtle, but in practice it changes where leaders spend their time and attention.

At this point, business focus shifts from reporting activity to designing consequence.

The Question Behind the Metrics

When organisations struggle to demonstrate impact, the instinct is usually to refine measurement.

Sometimes that helps. Often it doesn’t.

Measurement rarely fails because organisations lack indicators. It fails because it is asked to explain outcomes that were never deliberately designed to occur.

By the time the dashboard appears, the system is already producing the results it was structured to produce.

If impact matters, the question isn’t “what should we measure?”

It’s “what have we actually designed the system to produce?”

This article is part of a series exploring why organisations struggle to measure impact and what it takes to design work that actually produces meaningful outcomes.

Impact Measurement Series